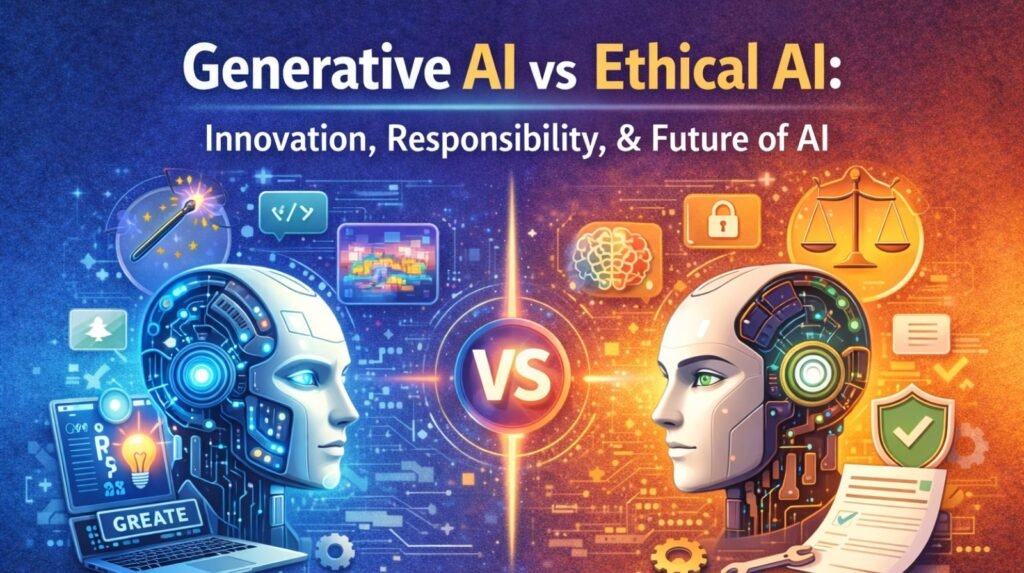

Artificial intelligence is no longer a distant promise; it is the engine quietly powering the tools we use every day. From the moment you unlock your phone with your face to the moment a chatbot resolves your customer service query at midnight, AI is at work. Across healthcare, finance, education, entertainment, and manufacturing, intelligent systems help diagnose diseases, write code, compose music, and generate marketing campaigns at a scale and speed that would have been unthinkable a decade ago. Yet the rapid rise of AI raises a profound question: just because we can build these systems, should we, and on what terms? At the centre of this debate lie two interconnected but distinct ideas: Generative AI and Ethical AI. The former is reshaping what machines can create; the latter is reshaping how we govern what they create. Understanding both, and how they interact, is essential for businesses, policymakers, developers, and everyday users navigating the Future of Artificial Intelligence.

What Is Generative AI?

Generative AI refers to a category of artificial intelligence systems designed to produce new content (text, images, audio, video, code, and more) by learning patterns from vast datasets. Unlike traditional discriminative models, which classify inputs or predict labels from existing data, generative models produce new outputs that follow the patterns of the data they were trained on.

The most prominent generative systems today fall into two families: Large Language Models (LLMs), which are built on the transformer architecture, and diffusion models. LLMs such as GPT-4 and Claude are trained on enormous text corpora to predict the next token (a word or word fragment) in a sequence; generating text means making that prediction repeatedly, appending each new token to the input. Diffusion models such as Stable Diffusion and DALL·E 3 learn to reverse a gradual noising process, turning random noise into an image step by step, which enables photorealistic image synthesis. Related techniques power video generation (Sora, Runway), voice cloning (ElevenLabs), and even molecule design for drug discovery.
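To make "predict the next token, then repeat" concrete, here is a deliberately tiny sketch. It replaces the neural network with simple word-pair (bigram) counts, so it illustrates only the autoregressive generation loop, not how GPT-4 or Claude work internally; the function names `train_bigram` and `generate` are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: str) -> dict[str, Counter]:
    """Count which word follows which: a toy stand-in for a learned next-token model."""
    words = corpus.split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(model: dict[str, Counter], start: str, max_tokens: int = 5) -> str:
    """Greedy autoregressive decoding: repeatedly append the most likely next word."""
    output = [start]
    for _ in range(max_tokens):
        candidates = model.get(output[-1])
        if not candidates:
            break  # no known continuation for the last word
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)


model = train_bigram("the cat sat on the mat and the cat slept")
print(generate(model, "the", max_tokens=4))  # prints "the cat sat on the"
```

Real LLMs differ in scale and mechanism (learned probabilities over tens of thousands of tokens, conditioned on the whole context rather than one previous word, and usually sampled rather than chosen greedily), but the outer loop of predicting, appending, and repeating is the same idea.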

Key Applications of Generative AI

- Content Creation & Marketing: Marketing teams use generative AI to draft blog posts, social media copy, email campaigns, and product descriptions in minutes. Tools like Jasper and Copy.ai have made AI-assisted writing a daily reality for thousands of businesses.

- Healthcare & Drug Discovery: Pharma companies use generative models to design novel protein structures and simulate drug interactions, aiming to shorten the lengthy R&D cycle. AI-generated medical summaries are also being used to streamline clinical documentation.

- Software Development: GitHub Copilot and similar tools autocomplete code, suggest entire functions, and explain complex logic; in a controlled experiment run by GitHub, developers using Copilot completed a coding task about 55% faster than those working without it.

- Creative Industries: Musicians, filmmakers, and game designers use generative AI to prototype compositions, generate visual assets, and create narrative branches at scale.

- Customer Experience & Automation: Generative AI powers next-generation chatbots and virtual assistants capable of nuanced, multi-turn conversations, transforming customer support and internal knowledge management.

The economic scale of this transformation is staggering. McKinsey estimates that generative AI could add between $2.6 trillion and $4.4 trillion annually to the global economy — a figure that underscores why so many enterprises are racing to integrate it.

What Is Ethical AI?

Ethical AI — sometimes called Responsible AI — is a framework of principles, practices, and governance mechanisms designed to ensure that AI systems are developed and deployed in ways that are fair, transparent, accountable, and safe. It is not a specific technology; it is a philosophy applied to technology.

AI Ethics emerged as a discipline precisely because AI systems, if left unchecked, can inherit and amplify the biases embedded in their training data, erode privacy, generate dangerous misinformation, and concentrate power in ways that are harmful to society. Ethical AI seeks to prevent these outcomes by asking: who benefits from this system, who might be harmed, and how do we ensure accountability?

Core Pillars of Ethical AI

- Fairness: AI systems must not discriminate on the basis of race, gender, age, disability, or other protected characteristics. This requires auditing training data for historical bias and designing evaluation metrics that measure fairness across demographic groups.

- Transparency: Users and regulators should be able to understand how an AI system reaches its conclusions. Explainability tools and model documentation (such as Model Cards) are key instruments here.

- Accountability: When an AI system causes harm, there must be a clear chain of responsibility — developers, deployers, and operators each have a role to play. Accountability frameworks define those roles explicitly.

- Privacy: Responsible AI requires that personal data be collected and processed lawfully, with informed consent, and that models do not inadvertently memorise or expose sensitive information.

- Safety & Robustness: AI systems should perform reliably under adversarial conditions and fail gracefully when they encounter inputs outside their training distribution.

- Human Oversight: High-stakes AI decisions — in criminal justice, healthcare, credit scoring — should involve meaningful human review rather than fully automated determination.
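One common way to "measure fairness across demographic groups" is demographic parity: compare the rate at which a model gives the favourable outcome to each group. The sketch below assumes binary predictions (1 = favourable, e.g. a loan approved) and one group label per example; the helper names and the audit data are hypothetical, and demographic parity is only one of several fairness metrics (others include equalised odds and calibration).

```python
from collections import defaultdict


def selection_rates(predictions: list[int], groups: list[str]) -> dict[str, float]:
    """Fraction of favourable predictions (1s) that each demographic group receives."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must be the same length")
    totals: dict[str, int] = defaultdict(int)
    favourable: dict[str, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        favourable[group] += pred
    return {g: favourable[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions: list[int], groups: list[str]) -> float:
    """Gap between the highest and lowest group selection rates; 0.0 means parity."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit data: 1 = loan approved, 0 = loan denied.
preds = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(selection_rates(preds, groups))                # {'A': 0.75, 'B': 0.25}
print(demographic_parity_difference(preds, groups))  # 0.5
```

A gap of 0.5 would warrant investigation. A small gap on this metric alone does not prove a model is fair, and different fairness metrics can conflict, so which one to prioritise is a context-specific judgement.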

Generative AI vs Ethical AI: Key Differences

It is important to understand that Generative AI and Ethical AI are not rivals — they are complementary forces. However, they operate on very different planes.

| Dimension | Generative AI | Ethical AI |
| --- | --- | --- |
| Primary Focus | Creating new content and automating tasks | Ensuring AI is fair, safe, and accountable |
| Core Goal | Innovation and productivity gains | Responsible development and harm prevention |
| Key Technologies | LLMs, diffusion models, GANs, transformers | Bias detection, explainability tools, governance frameworks |
| Main Stakeholders | Developers, businesses, end users | Regulators, ethicists, civil society, developers |
| Risk Profile | Risks it creates: misinformation, copyright infringement, deepfakes | Risks it targets: discrimination, opacity, surveillance |
| Regulatory Status | Subject to emerging regulation (EU AI Act, US executive orders) | Increasingly codified in law and corporate policy |

Think of Generative AI as the engine of a powerful vehicle, and Ethical AI as the combination of traffic laws, seatbelts, and licensing requirements. You need the engine to move; you need the safety systems to ensure the journey does not end in catastrophe.

Key Challenges of Generative AI

The enormous promise of Generative AI comes with equally significant risks. Understanding these challenges is the first step toward mitigating them.

1. Misinformation and Deepfakes

Generative AI has made it trivially easy to produce convincing fake text, images, audio, and video. Deepfakes, synthetic media that place real people in fabricated scenarios, pose acute risks to political discourse, reputations, and public trust. AI-generated disinformation has already been deployed in attempts to mislead voters during elections. Combating this requires both technical solutions (watermarking, provenance tracking) and legal frameworks.
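The provenance-tracking idea can be sketched in a few lines: when content is generated, attach a signed record containing a cryptographic hash of that content, so that anyone holding the verification key can later check that the content is unchanged and the record is genuine. This is a simplified illustration that assumes a shared secret key (`SECRET_KEY`, hypothetical) and HMAC-SHA256; real provenance standards such as C2PA use public-key certificates instead. It also covers provenance only, not watermarking, which hides a signal inside the content itself.

```python
import hashlib
import hmac
import json

SECRET_KEY = b"demo-key-not-for-production"  # hypothetical shared key, for illustration only


def make_provenance_record(content: bytes, generator: str) -> dict:
    """Build a record of the content's SHA-256 hash and origin, then sign it with HMAC."""
    record = {"sha256": hashlib.sha256(content).hexdigest(), "generator": generator}
    payload = json.dumps(record, sort_keys=True).encode()
    record["signature"] = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return record


def verify(content: bytes, record: dict) -> bool:
    """True only if the record's signature is valid and the content hash still matches."""
    claimed = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(claimed, sort_keys=True).encode()
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, record.get("signature", ""))
    return signature_ok and claimed.get("sha256") == hashlib.sha256(content).hexdigest()


image = b"...synthetic image bytes..."
record = make_provenance_record(image, generator="example-image-model")
print(verify(image, record))              # True
print(verify(image + b"edited", record))  # False
```

One limitation worth noting: metadata like this can simply be stripped from a file, so a missing record proves nothing about whether content is synthetic. That gap is why provenance is usually paired with watermarking and legal measures.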

2. Copyright and Intellectual Property

Generative AI models are trained on massive datasets that frequently include copyrighted works (books, artworks, source code, and news articles), often without the explicit consent of creators. This has triggered a wave of lawsuits from artists, authors, and publishers. Although the legal landscape remains unsettled, Responsible AI development calls for licensing frameworks that respect intellectual property rights.

3. Data Privacy Concerns

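Training data often contains personal information, and generative models can inadvertently memorise and reproduce it. Regulations such as the GDPR enshrine the right to erasure (the "right to be forgotten"), which is technically difficult to honour in large neural networks: a person's data is not stored as a deletable record but is diffused across millions or billions of learned parameters, so removing its influence typically means retraining the model or applying still-maturing "machine unlearning" techniques. Enterprises deploying generative AI tools must also be cautious about what employees type into them, since prompts may be logged by the provider or, depending on its terms of service, used to train future models.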
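A common first line of defence against employees pasting sensitive data into external generative AI tools is to redact obvious personal identifiers before a prompt leaves the organisation. The sketch below uses two illustrative regular expressions; the patterns and the `redact` helper are assumptions, not any particular product's API. Regexes miss names, addresses, and context-dependent details, so in practice they are usually combined with dedicated PII-detection tools and clear usage policies.

```python
import re

# Hypothetical, deliberately crude patterns: real coverage needs names, addresses, IDs, etc.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}


def redact(prompt: str) -> str:
    """Replace every match of each PII pattern with a labelled placeholder."""
    for label, pattern in PII_PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


print(redact("Email jane.doe@example.com or call +44 20 7946 0958 about the refund."))
# prints "Email [EMAIL] or call [PHONE] about the refund."
```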

4. AI Bias and Discrimination

Because generative models learn from human-generated data, they reflect human biases. These biases can manifest in generated content — for example, models that default to depicting doctors as male or criminals as members of minority groups. Without rigorous bias auditing and diverse training data, Generative AI risks perpetuating and scaling systemic inequalities.

5. The Need for AI Governance and Regulation

Governments worldwide are scrambling to regulate AI. The European Union’s landmark AI Act — the world’s first comprehensive AI regulation — classifies AI applications by risk level and imposes strict obligations on high-risk uses in healthcare, law enforcement, and critical infrastructure. The United States has issued executive orders and established AI Safety Institutes. China has implemented regulations on generative AI content. Businesses must keep pace with this rapidly evolving regulatory landscape to remain compliant and competitive.

How Businesses Can Implement Responsible AI Practices

Embracing Responsible AI is not simply a matter of compliance — it is increasingly a source of competitive advantage and brand trust. Here are practical steps organisations can take:

- Establish an AI Ethics Policy: Define clear principles for AI development and deployment within your organisation, covering fairness, transparency, privacy, and accountability.

- Conduct Regular Bias Audits: Before deploying any AI model, rigorously test it across demographic groups to identify and correct discriminatory outputs.

- Implement Data Governance Frameworks: Know what data you collect, where it is stored, how it is used, and how long it is retained. Ensure compliance with applicable privacy regulations.

- Maintain Human Oversight: Particularly for high-stakes decisions, ensure that AI recommendations are reviewed by qualified humans before action is taken.

- Invest in Explainable AI (XAI): Adopt tools and techniques that make AI decisions interpretable to non-technical stakeholders, including regulators and customers.

- Engage with the Broader Ecosystem: Participate in industry consortia, engage with regulators proactively, and stay informed about evolving AI Ethics standards.
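The "Maintain Human Oversight" step can be enforced in software as well as in policy. Here is a minimal sketch that assumes each AI recommendation carries a confidence score and a flag marking high-stakes cases. Both fields, the `route` function, and the 0.9 threshold are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    recommendation: str
    confidence: float  # model's score in [0, 1]
    high_stakes: bool  # e.g. credit denial, medical triage


def route(decision: Decision, threshold: float = 0.9) -> str:
    """Send high-stakes or low-confidence recommendations to a qualified human reviewer."""
    if decision.high_stakes or decision.confidence < threshold:
        return "human_review"
    return "auto_apply"


print(route(Decision("A-1", "approve", 0.97, high_stakes=False)))  # auto_apply
print(route(Decision("A-2", "deny", 0.97, high_stakes=True)))      # human_review
print(route(Decision("A-3", "approve", 0.60, high_stakes=False)))  # human_review
```

High-stakes cases go to a person regardless of confidence because a model's confidence score is not the same as correctness: a model can be confidently wrong, particularly on inputs unlike its training data.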

The Future of Artificial Intelligence: Balancing Innovation and Responsibility

The Future of Artificial Intelligence will be shaped not by any single breakthrough, but by the choices made today about how to govern these technologies. Organisations and governments that take AI Ethics seriously, not as a compliance checkbox but as a genuine design principle, will build AI systems that are more trustworthy, more durable, and ultimately more valuable.

We are entering an era of multimodal, agentic AI — systems that can see, hear, reason, and act across complex environments with minimal human instruction. The stakes of getting the ethics right have never been higher. Autonomous AI agents making decisions in financial markets, healthcare systems, or national infrastructure must be held to the highest standards of safety, transparency, and accountability.

At the same time, we must resist the temptation to let ethical concerns become a pretext for stifling beneficial innovation. The goal is not to slow AI down, but to steer it wisely. Generative AI and Ethical AI, working in tandem, represent one of the most powerful combinations in modern technology: the ability to create at scale, guided by the wisdom to do so responsibly.