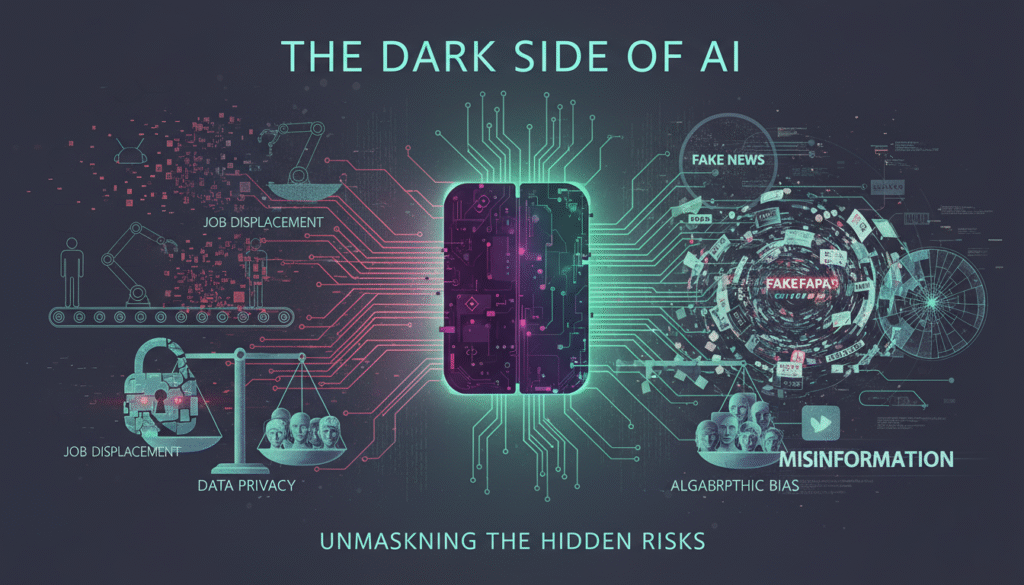

As we move deeper into the age of technology, the dark side of AI becomes increasingly apparent. While artificial intelligence promises efficiency and innovation, it also harbors risks that are often overlooked. Understanding these risks is crucial for individuals and businesses alike, as the implications of AI technology extend far beyond mere convenience. This blog will navigate through the less-discussed dangers of AI, including systemic job displacement, data privacy erosion, algorithmic bias, misinformation, the ethical implications of deepfakes, and over-reliance on AI.

Understanding the Dark Side of AI

The dark side of AI encompasses a range of risks that can significantly impact society. As AI technology advances, it is essential to recognize both its potential benefits and the associated dangers. While AI can enhance productivity, it can also lead to unforeseen consequences that affect various aspects of life.

Businesses leveraging AI technology advancements should be aware of these hidden risks. From ethical dilemmas to technical vulnerabilities, understanding the implications of AI is vital for responsible usage. This section will explore the various categories of risks associated with AI, setting the stage for a deeper analysis.

The Impact of AI on Society

AI’s integration into daily life is profound, influencing everything from personal assistants to complex decision-making systems. However, this rapid adoption raises questions about societal readiness to handle the consequences. Many businesses report a lack of frameworks to address ethical concerns, which can lead to public distrust.

The Need for Ethical Guidelines

As AI systems become more autonomous, establishing ethical guidelines is paramount. These guidelines should address accountability, transparency, and fairness in AI applications. Without a clear ethical framework, the risks associated with AI may escalate, leading to societal backlash.

Systemic Job Displacement

One of the most pressing risks is systemic job displacement. As automation becomes more prevalent, many industries are experiencing significant changes in their workforce dynamics. As of 2026, an estimated 30% of jobs could be at risk due to AI and automation, particularly in sectors like manufacturing, retail, and customer service.

Businesses should be prepared for job market changes due to AI and consider strategies for workforce transition. The following points highlight the impact of AI on employment:

Job Loss in Vulnerable Sectors

Certain sectors are more susceptible to AI-induced job loss. For instance, routine and manual tasks are increasingly being automated, leading to significant workforce reductions. This trend raises concerns about the future of employment and the need for retraining programs.

The Role of Upskilling

To mitigate job displacement, upskilling and reskilling initiatives are essential. Companies must invest in training programs that equip employees with skills relevant to an AI-driven economy. This proactive approach can help workers adapt to new roles, ensuring they remain competitive in the job market.

Pervasive Data Privacy Erosion

With the rise of AI, data privacy has become a critical concern. Because AI systems require vast amounts of data to function effectively, the potential for privacy erosion increases. As of 2026, nearly 60% of consumers are reportedly worried about how AI technologies use their data.

Organizations must navigate the complexities of data privacy challenges while implementing AI solutions. The following aspects highlight the significance of data privacy in the age of AI:

Increased Surveillance

AI technologies often rely on surveillance data to enhance their functionality. This trend raises ethical questions about consent and the extent to which individuals are monitored. Balancing the benefits of AI with privacy rights is a delicate challenge that organizations must address.

Data Breaches and Security Risks

As AI systems become more integrated into business operations, the risk of data breaches escalates. Organizations must prioritize cybersecurity measures to protect sensitive information from unauthorized access. Failure to do so can result in significant financial and reputational damage.

Algorithmic Bias Amplification

Algorithmic bias is another critical concern within the dark side of AI. AI systems can inadvertently perpetuate existing biases present in the data they are trained on. In 2026, studies indicate that 40% of AI practitioners acknowledge the potential for bias in their algorithms, which can lead to unfair outcomes.

Understanding algorithmic fairness issues is vital for developing equitable AI systems. The following points illustrate the implications of algorithmic bias:

Discrimination in Decision-Making

AI algorithms are increasingly used in decision-making processes, from hiring to loan approvals. If these algorithms are biased, they can lead to discriminatory practices that disproportionately affect marginalized groups. This highlights the need for transparency in AI development.
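One way to make this kind of bias visible is to compare approval rates across groups. The sketch below is only an illustration: the group labels and sample decisions are made up, and the 0.8 cutoff follows the commonly cited "four-fifths" rule of thumb rather than anything prescribed in this post.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the approval rate per group.

    `decisions` is an iterable of (group, approved) pairs; both the
    structure and the group names are hypothetical, for illustration only.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest approval rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favored
    return min(rates.values()) / highest


# Made-up sample: group A approved 6 of 10 times, group B 3 of 10 times.
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)

# Assumed threshold: flag for review when the ratio falls below 0.8.
needs_review = ratio < 0.8
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove discrimination on its own, but it is a simple, transparent signal that a decision system deserves a closer look.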

The Importance of Diverse Data

To combat algorithmic bias, it is crucial to use diverse and representative datasets during the training process. Ensuring that AI systems are exposed to a wide range of perspectives can help mitigate bias and promote fairness in outcomes.

Sophisticated Misinformation & Propaganda

AI technologies have the potential to amplify misinformation and propaganda, posing significant risks to public discourse. As of 2026, AI-generated content is becoming increasingly sophisticated, making it difficult for individuals to tell fact from fiction.

Businesses must be aware of the implications of misinformation spread by AI and take proactive measures to address this issue. The following aspects highlight the dangers of AI-generated misinformation:

The Role of Deepfakes

Deepfake technology, powered by AI, can create hyper-realistic videos that misrepresent individuals and events. This poses ethical concerns and can lead to reputational harm for individuals and organizations alike. Understanding the implications of deepfakes is crucial in a world where misinformation can spread rapidly.

Combating Misinformation

To combat the spread of misinformation, organizations should invest in media literacy programs and promote critical thinking skills among consumers. Encouraging individuals to verify sources and question information can help mitigate the impact of AI-generated misinformation.

Ethical Implications of Deepfakes

The rise of deepfake technology brings forth significant ethical concerns. While deepfakes can be used for entertainment purposes, they can also be weaponized to manipulate public opinion and spread false narratives. In 2026, the ethical implications of deepfakes are at the forefront of discussions surrounding AI.

Potential for Manipulation

Deepfakes can be used to create misleading content that can influence elections, public opinion, and social movements. Understanding the potential for manipulation is essential for individuals and organizations alike to navigate the complexities of digital content.

Legal and Regulatory Challenges

As deepfake technology evolves, legal frameworks must adapt to address the associated risks. Policymakers face the challenge of creating regulations that protect individuals from malicious use without stifling innovation in AI.

Over-reliance and AI Dependency

The increasing reliance on AI systems poses risks of dependency that can have far-reaching consequences. As organizations integrate AI into their operations, there is a danger of becoming overly reliant on these technologies. As of 2026, an estimated 50% of businesses express concern about their dependency on AI for critical decision-making.

The Risks of Automation

Over-reliance on AI can lead to a lack of human oversight in decision-making processes. This can result in errors and unintended consequences that may not be immediately apparent. Organizations must strike a balance between leveraging AI and maintaining human judgment in critical areas.
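One common way to keep human judgment in the loop is to let the system act on its own only when it is confident, and to send everything else to a person. The following is a minimal sketch under stated assumptions: the `Decision` type, the `route` function, and the 0.9 threshold are all hypothetical and would need tuning for any real use case.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    """A hypothetical model output: a label plus a confidence in [0, 1]."""
    label: str
    confidence: float


def route(decision, threshold=0.9):
    """Return "auto" for confident decisions, "human_review" otherwise.

    The threshold is an assumed value for illustration, not a recommendation.
    """
    if decision.confidence >= threshold:
        return "auto"
    return "human_review"


print(route(Decision("approve", 0.95)))
print(route(Decision("deny", 0.60)))
```

Where the threshold sits is a business decision: a higher value sends more cases to people and costs more time, while a lower value trades oversight for speed.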

Preparing for AI Failures

Organizations should develop contingency plans to address potential AI failures. Understanding the limitations of AI systems and preparing for unexpected outcomes can help mitigate risks associated with over-dependence.
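A contingency plan can be as simple as a fallback path that takes over when the AI component fails. The sketch below assumes a hypothetical `model` callable and a conservative `fallback` rule; neither refers to a real library, and a production system would also log and alert on each failure.

```python
def decide_with_fallback(model, features, fallback):
    """Try the model first; use the fallback rule if it fails.

    Returns a (result, source) pair so callers can track how often the
    fallback path is used. All names here are hypothetical.
    """
    try:
        result = model(features)
    except Exception:
        # The model crashed or was unreachable: use the conservative rule.
        return fallback(features), "fallback"
    if result is None:
        # The model returned nothing usable.
        return fallback(features), "fallback"
    return result, "model"


def broken_model(features):
    """Stand-in for an AI service that is currently down."""
    raise RuntimeError("model unavailable")


def working_model(features):
    """Stand-in for a healthy AI service."""
    return "approve"


def conservative_rule(features):
    """Fallback that defers every case to a person."""
    return "manual_review"


print(decide_with_fallback(broken_model, {"amount": 100}, conservative_rule))
print(decide_with_fallback(working_model, {"amount": 100}, conservative_rule))
```

Tracking how often the `"fallback"` source appears gives an early warning that the AI system is degrading before the problem becomes visible to customers.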

Conclusion

The dark side of AI encompasses a range of risks that require careful consideration. From job displacement to data privacy erosion, algorithmic bias, misinformation, and ethical concerns, the implications of AI technology are profound. As we navigate this technological landscape, it is essential to prioritize ethical guidelines, transparency, and accountability in AI.

By understanding these risks, individuals and organizations can take proactive measures to mitigate the dangers associated with AI. Embracing a balanced approach to AI technology will ensure that its benefits can be harnessed while minimizing potential harm.